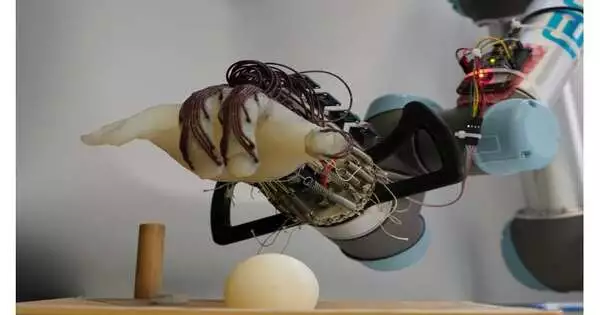

Researchers have created a low-cost, energy-efficient robotic hand that is capable of grasping a variety of objects—and not dropping them—using just the movement of its wrist and the sensation in its “skin.”

A human can easily grasp objects of many different sizes, shapes, and textures, but this is difficult for a robot. University of Cambridge researchers designed a soft, 3D-printed robotic hand that can perform a range of complex movements even though its fingers cannot move independently.

The robot hand was programmed to pick up various objects, and it was able to anticipate whether it would drop them by using data from sensors attached to its “skin.”


Compared to robots with fully motorized fingers, this type of passive movement makes the robot much easier to control and far more energy-efficient. According to the researchers, their adaptable design could help in the development of low-cost robots that move more naturally and can learn to grasp a wide range of objects. The findings are reported in the journal Advanced Intelligent Systems.

The robot hand picks up a peach using only the movement of its wrist.

In the natural world, movement results from the interplay between the brain and the body. This allows both humans and animals to move in complex ways without wasting energy. Recent advances in 3D printing have allowed researchers to incorporate soft components into robot designs, adding complexity to simple, energy-efficient systems.

The human hand is extremely complex, and recreating all of its dexterity and adaptability in a robot is a major challenge. Many of today's most advanced robots struggle with manipulation tasks that small children perform with ease. Humans, for instance, instinctively know how much force to apply when picking up an egg, but a robot has to strike a balance: too much force could crack the egg, while too little could cause the robot to drop it. In addition, a fully actuated robot hand, with motors for every joint and finger, consumes a lot of energy.

Researchers in Cambridge’s Department of Engineering have been working on potential solutions to both issues, including a robot hand that can grasp a variety of objects with the proper amount of pressure while consuming a small amount of energy.

“In earlier experiments, our lab has shown that it’s possible to get a significant range of motion in a robot hand just by moving the wrist,” said co-author Dr. Thomas George-Thuruthel, who is now based at University College London (UCL) East. “We wanted to test whether a robot hand based on passive movement could not only grasp objects but also be able to anticipate whether it would drop the objects and adjust in accordance with that prediction.”

To let the hand sense what it was touching, the researchers used a 3D-printed anthropomorphic hand with embedded tactile sensors. The hand could move only passively, driven by the movement of its wrist.

The team carried out more than 1,200 tests with the robot hand to see how well it could grasp small objects without dropping them. The robot was first trained on small 3D-printed plastic balls, grasping them with a pre-defined action learned from human demonstrations.

This type of hand “has a bit of springiness to it: it can pick things up by itself without any actuation of the fingers,” said first author Dr. Kieran Gilday, who is now based at EPFL in Lausanne, Switzerland. The tactile sensors give the robot feedback on how well its grip is holding, so it can tell when an object begins to slip. This helps it predict when a grasp is about to fail.
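The article does not say how the hand turns tactile readings into a slip warning. As a purely hypothetical illustration, one simple heuristic is to record the total pressure just after the grasp closes and flag a possible slip when it falls by more than a set fraction. The `SlipMonitor` class, the 30% threshold, and the idea of summing all sensor channels are assumptions made for this sketch, not details of the Cambridge system.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class SlipMonitor:
    """Hypothetical slip detector based on a drop in total tactile pressure."""

    # Assumed threshold: flag a slip once total pressure falls 30% below baseline.
    drop_fraction: float = 0.3
    # Total pressure recorded on the first reading after the grasp closes.
    baseline: Optional[float] = None

    def update(self, readings: Sequence[float]) -> bool:
        """Return True if this frame of tactile readings suggests the object is slipping."""
        total = sum(readings)
        if self.baseline is None:
            self.baseline = total  # the first frame becomes the reference
            return False
        return total < (1.0 - self.drop_fraction) * self.baseline


# Three made-up frames from a hypothetical three-sensor array.
monitor = SlipMonitor()
frames = [[1.0, 1.2, 0.8], [0.9, 1.1, 0.8], [0.5, 0.6, 0.4]]
flags = [monitor.update(frame) for frame in frames]
print(flags)  # [False, False, True]
```

A fixed threshold is about the simplest choice possible; it ignores where on the hand the pressure is applied, which the researchers suggest the sensors can also help estimate.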

The robot used trial and error to learn which types of grip would succeed. After completing its training with the balls, it attempted to grasp other objects, including a peach, a computer mouse, and a roll of bubble wrap. The hand successfully grasped 11 of the 14 objects tested.

Researchers have created a low-cost, energy-efficient robotic hand that can grasp a variety of objects—and not drop them—by simply moving its wrist and using its “skin” as a sense organ. Credit: University of Cambridge

George-Thuruthel explained that the sensors, which act somewhat like the robot’s skin, “measure the pressure being applied to the object.” “We are unsure of the exact information the robot is receiving, but it should be able to estimate where and how strongly the object has been grasped,” he said.

The robot “learns that a combination of a particular motion and a particular set of sensor data will lead to failure, which makes it a customizable solution,” said Gilday. “The hand is very simple, but it can pick up numerous objects using the same method.”
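The article does not describe the learning method used in the study. As a rough sketch of the idea in Gilday's quote, assuming a plain logistic-regression classifier (a stand-in, not necessarily what the researchers used), the code below learns to predict failure from one hypothetical motion feature (`wrist_speed`) and one hypothetical tactile feature (`grip_pressure`), then picks the candidate wrist speed with the lowest predicted risk. The training data are synthetic: a trial is labeled a failure whenever the wrist moves faster than the grip is firm.

```python
import math
import random
from typing import List, Sequence, Tuple

# One trial: ([wrist_speed, grip_pressure], 1 if the grasp failed else 0).
# Both features are hypothetical and scaled to the range [0, 1].
Sample = Tuple[List[float], int]


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def failure_probability(weights: Sequence[float], bias: float, x: Sequence[float]) -> float:
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


def train(samples: Sequence[Sample], epochs: int = 1000, lr: float = 1.0) -> Tuple[List[float], float]:
    # Batch gradient descent on the mean cross-entropy (log) loss.
    n_features = len(samples[0][0])
    weights, bias = [0.0] * n_features, 0.0
    m = len(samples)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in samples:
            err = failure_probability(weights, bias, x) - y  # dLoss/dz for log loss
            for i, xi in enumerate(x):
                grad_w[i] += err * xi
            grad_b += err
        weights = [w - lr * g / m for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / m
    return weights, bias


def synthetic_trials(n: int, seed: int = 0) -> List[Sample]:
    # Made-up data: a trial "fails" when the wrist moves faster than the grip is firm.
    rng = random.Random(seed)
    trials = []
    for _ in range(n):
        speed, pressure = rng.random(), rng.random()
        trials.append(([speed, pressure], int(speed > pressure)))
    return trials


def pick_safest_speed(weights: Sequence[float], bias: float,
                      candidate_speeds: Sequence[float], grip_pressure: float) -> float:
    # Adjust to the prediction: choose the wrist speed with the lowest failure risk.
    return min(candidate_speeds,
               key=lambda s: failure_probability(weights, bias, [s, grip_pressure]))


weights, bias = train(synthetic_trials(100))
print(failure_probability(weights, bias, [0.9, 0.1]))  # fast motion, weak grip
print(failure_probability(weights, bias, [0.1, 0.9]))  # slow motion, firm grip
print(pick_safest_speed(weights, bias, [0.2, 0.5, 0.8], grip_pressure=0.4))
```

Choosing the lowest-risk action is only one simple way to act on a failure prediction; a real system would draw on richer sensor data gathered from physical grasp trials.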

The main benefit of this design is the wide range of motion it can achieve without any actuators, according to Professor Fumiya Iida of Cambridge’s Department of Engineering. “We want to make the hand as simple as we can. Without any actuators, we can obtain a great deal of useful information and a high degree of control, which will allow us to produce more complex behavior in a more effective manner once we do add them.”

Beyond its energy consumption, a fully actuated robotic hand also poses a difficult control problem. The Cambridge hand’s passive design, which relies on a small number of sensors, is easier to control, provides a wide range of motion, and streamlines the learning process.

The system could be improved in the future in a number of ways, such as by incorporating computer vision capabilities or by teaching the robot to take advantage of its surroundings so that it can grasp a wider variety of objects.

More information: Predictive Learning of Error Recovery with a Sensorised Passivity-based Soft Anthropomorphic Hand, Advanced Intelligent Systems (2023). DOI: 10.1002/aisy.202200390