Our recollections are filled with detail: we can vividly recall the color of our house, the layout of our kitchen, or the front of our favorite café. Neuroscientists have long been baffled as to how the brain encodes this information.

In a new Dartmouth study, researchers discovered a neural coding process that permits information to be transferred between perceptual and memory regions of the brain. The findings are reported in Nature Neuroscience.

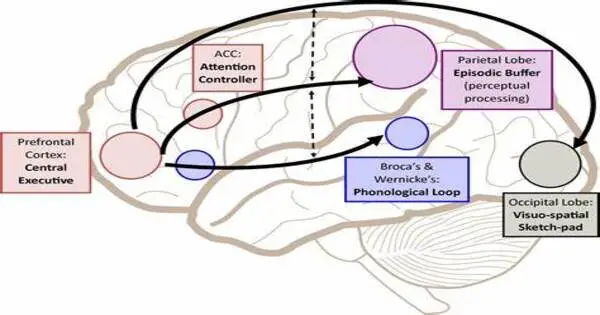

Prior to this discovery, the standard theory of brain organization held that perceptual regions of the brain represent the world “as it is,” with the visual cortex representing the external world based on how light falls on the retina, or “retinotopically.” In contrast, the brain’s memory centers were thought to represent information in an abstract form, stripped of its physical features. However, the co-authors argue that this explanation fails to account for the possibility that, as information is encoded or retrieved, these regions may in fact share a common code.

“We found that memory-related brain areas encode the world like a ‘photographic negative’ in space,” says co-lead author Adam Steel, a postdoctoral researcher in the Department of Psychological and Brain Sciences and fellow in the Neukom Institute for Computational Science at Dartmouth. “And that ‘negative’ is part of the mechanics that move information in and out of memory, and between perceptual and memory systems.”

In a series of experiments, participants’ perception and memory were assessed while their brain activity was monitored with a functional magnetic resonance imaging (fMRI) scanner. The researchers discovered an opposing push-pull coding process that shapes the interaction between perceptual and memory areas in the brain.

The findings revealed that when light strikes the retina, visual areas of the brain respond by increasing their activity to reflect the pattern of light. Memory centers in the brain also respond to visual stimuli, but, unlike visual areas, their neural activity declines when processing the same visual pattern.

The co-authors report that the study has three unusual findings. The first is their discovery that a visual coding principle is preserved in memory systems. The second is that this visual code is flipped upside down in memory systems. “When you see something in your visual field, neurons in the visual cortex are driving while those in the memory system are quieted,” says senior author Caroline Robertson, an assistant professor of psychological and brain sciences at Dartmouth.

Third, the relationship reverses during memory recall. “If you close your eyes and remember that visual stimuli in the same space, you’ll flip the relationship: your memory system will be driving, suppressing the neurons in perceptual regions,” Robertson says.

“Our results provide a clear example of how shared visual information is used by memory systems to bring recalled memories in and out of focus,” says Ed Silson, co-lead author and human cognitive neuroscience lecturer at the University of Edinburgh.

Moving forward, the team intends to investigate how this push-pull dynamic between perception and memory may contribute to difficulties in clinical disorders, such as Alzheimer’s.