Researchers from Skoltech and the Artificial Intelligence Research Institute (AIRI) have created a new neural network-based algorithm for optimal data transfer between domains. Unlike most comparable methods, which need paired training datasets (that is, matched input-output samples), the new technique can be trained on independent datasets from the input and output domains. Compared with existing methods, the algorithm is theoretically sound and yields a more interpretable result.

Large training datasets, which are difficult to come by, are needed by contemporary machine learning models for applications like face or speech recognition and medical image analysis. Because of this, scientists and engineers must produce synthetic data that closely resembles the real-world data that is already available. Generative models, which have recently made enormous strides in terms of text and image quality, greatly simplify the task.

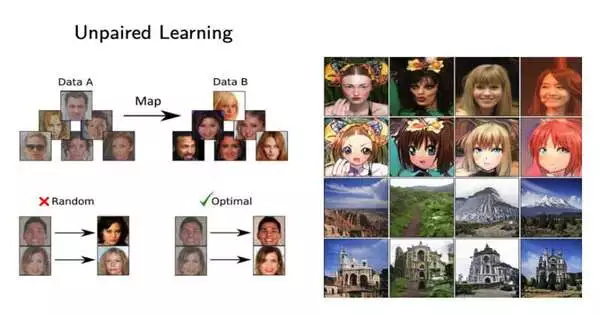

In other words, generative models assist in transferring knowledge from one domain to another. A neural network, for instance, can transform a human-drawn sketch into a digital image or improve the fine details in a satellite image. These tasks typically call for paired training samples, that is, sets of input-output images that a neural network learns to generalize and extend to fresh incoming images, which, among other things, helps deal with multiple identical images of varying quality.

Because paired data is typically very expensive or difficult to obtain, researchers often have to make do with independent datasets, which makes it more challenging to produce high-quality results.

“Standard approaches to developing generative models for domain transfer are largely heuristic and reliant on numerous hyperparameters that influence training outcomes and are difficult to select. Such methods also lack a solid mathematical foundation. Model training is therefore an unstable process that requires manual adjustment. It is not surprising that it is challenging to draw rigorous theoretical conclusions about the training results,” said Prof. Evgeny Burnaev, director of AI at Skoltech and head of the research team at AIRI.

In their investigation, the group revisited the works of Soviet economist and mathematician Leonid Kantorovich and used his theory of the most efficient way to move goods (optimal transport theory) to develop a brand-new algorithm for organizing optimal data transfer between domains. The new algorithm, called Neural Optimal Transport, uses deep neural networks and independent datasets.
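To give a feel for what "optimal transport" means with unpaired data, here is a minimal toy sketch in Python. It is not the Neural Optimal Transport algorithm and does not use neural networks; it only assumes two small, independent sets of one-dimensional points and a squared-distance cost, and all function names (`transport_cost`, `brute_force_plan`, `sorted_plan`) are hypothetical. It finds the cheapest one-to-one assignment by exhaustive search and compares it with the known shortcut for one dimension, where matching sorted sources to sorted targets is optimal for a convex cost.

```python
from itertools import permutations


def transport_cost(sources, targets, assignment):
    """Total squared-distance cost of sending sources[i] to targets[assignment[i]]."""
    return sum((sources[i] - targets[j]) ** 2 for i, j in enumerate(assignment))


def brute_force_plan(sources, targets):
    """Try every one-to-one assignment and keep the cheapest (tiny inputs only)."""
    n = len(sources)
    return min(
        permutations(range(n)),
        key=lambda p: transport_cost(sources, targets, p),
    )


def sorted_plan(sources, targets):
    """1D shortcut: pair the k-th smallest source with the k-th smallest target."""
    src_order = sorted(range(len(sources)), key=lambda i: sources[i])
    tgt_order = sorted(range(len(targets)), key=lambda j: targets[j])
    plan = [0] * len(sources)
    for i, j in zip(src_order, tgt_order):
        plan[i] = j
    return tuple(plan)


# Two independent (unpaired) samples; the values are made up for illustration.
sources = [0.0, 2.0, 5.0, 1.0]
targets = [6.0, 1.5, 0.5, 3.0]

best = brute_force_plan(sources, targets)
fast = sorted_plan(sources, targets)

print("brute-force plan:", best, "cost:", transport_cost(sources, targets, best))
print("sorted plan:     ", fast, "cost:", transport_cost(sources, targets, fast))
```

Nothing in the input says which source belongs with which target; the pairing is derived purely from the cost, which is the sense in which optimal transport can work with independent datasets. Exhaustive search is only feasible for a handful of points, and the sorting shortcut is specific to one dimension, so neither carries over to images.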

When tested on unpaired domain transfer, the algorithm outperforms existing techniques in a variety of tasks, including image styling. Additionally, in contrast to other techniques, it relies on sound mathematical principles, produces a more interpretable result, and requires fewer hyperparameters, which are typically tricky to tune.

“Generative neural networks have been constructed using optimal transport numerical methods for many years. Our exploratory research revealed that these models have a very high error rate when calculating optimal transport,” said Alexander Korotin, an AIRI research scientist and the team leader at Skoltech. “We have not only been able to identify the causes of the error, but we have also been able to perform analysis and develop fundamentally novel efficient methods to build generative models for unpaired domain transfer based on optimal transport theory.”

The study is posted on the arXiv preprint server.

More information: Alexander Korotin et al, Neural Optimal Transport, arXiv (2022). DOI: 10.48550/arxiv.2201.12220