According to new research from the University of Birmingham, autistic people’s ability to accurately identify facial expressions is influenced by the speed at which the expression is produced as well as its intensity. In particular, autistic people are less able to accurately identify anger from facial expressions produced at a normal ‘real world’ speed. The researchers also found that people with a related condition, alexithymia, perceived all expressions as more intensely emotional.

People with autism spectrum disorder interpret facial expressions differently. Researchers have now revealed more about how this comes about. They induced abnormalities in a neural network model to investigate the effects on learning development in the brain.

People with autism spectrum disorder have difficulty interpreting facial expressions. Researchers at Tohoku University have shown how this may come about, using a neural network model that simulates the brain on a computer. The findings were published in the journal Scientific Reports.

“By observing facial expressions, humans may identify various emotions such as grief and rage. However, little is known about how we learn to detect distinct emotions based on visual cues from facial expressions,” said Yuta Takahashi, one of the paper’s coauthors. “It’s also unclear what changes happen in this process that cause persons with autism spectrum disorder to struggle to read facial emotions.”

To learn more, the research team applied predictive processing theory. According to this theory, the brain constantly predicts the next sensory stimulus and adapts when its prediction is wrong. Sensory information, such as facial expressions, helps reduce the prediction error.
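The predict-compare-adapt loop described above can be illustrated with a toy sketch. This is not the researchers' model: the function name `track_signal`, the `learning_rate` parameter, and the constant input signal are all assumptions made purely for illustration.

```python
def track_signal(observations, learning_rate=0.5):
    """Return the absolute prediction error at each step (toy example)."""
    prediction = 0.0  # initial guess before any input arrives
    errors = []
    for observed in observations:
        error = observed - prediction        # how wrong the prediction was
        errors.append(abs(error))
        prediction += learning_rate * error  # adapt toward the sensory input
    return errors


# A constant "sensory" signal (hypothetical): the error halves at every step.
errors = track_signal([1.0] * 5)
print(errors)  # [1.0, 0.5, 0.25, 0.125, 0.0625]
```

Each new observation pulls the prediction closer to the input, so the error shrinks over time. The study's model applies the same general idea to far richer data, namely the movement of facial parts in video.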

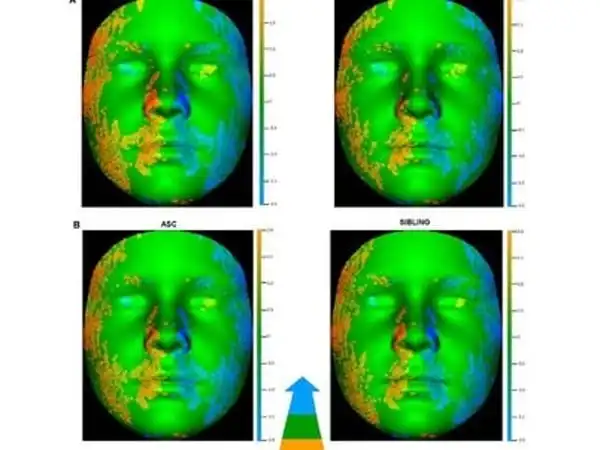

The researchers built predictive processing theory into an artificial neural network model, which reproduced the developmental process by learning to predict how parts of the face would move in videos of facial expressions. Clusters of emotions then self-organized in the model’s higher-level neuron space, even though the model was never told which emotion the facial expression in each video corresponded to.

The model was able to generalize to unknown facial expressions that were not part of its training, reproducing the movements of facial parts and reducing prediction errors.

The researchers then ran experiments in which they induced abnormalities in the activity of the model’s neurons to explore the effects on learning development and cognitive characteristics. When the heterogeneity of activity in the neuronal population was reduced, the model’s ability to generalize also declined, and the formation of emotion clusters in the higher-level neurons was suppressed. As a result, the model tended to fail to identify the emotion in unknown facial expressions, a symptom similar to that seen in autism spectrum disorder.

According to Takahashi, the study demonstrated that predictive processing theory, when combined with a neural network model, may explain emotion identification from facial expressions. “We hope to gain a better understanding of how humans learn to perceive emotions and the cognitive characteristics of people with autism spectrum disorder,” Takahashi stated. “The research will aid in the development of appropriate intervention approaches for persons who have difficulty identifying emotions.”

A key trait that the study found to be specific to people with alexithymia was a tendency to perceive expressions as intensely emotional. People with alexithymia were more likely to give expressions higher ratings for both correct and incorrect emotions. For example, those with alexithymia would rate a happy expression as more intensely happy, and an angry or sad expression as more intensely angry or sad, than those without alexithymia.

“One theory is that people with alexithymia are less able to evaluate the intensity of emotional expressions and are more prone to become confused about which emotion is being expressed,” Connor explains.

“Everyone will know or meet someone with autism at some time in their lives,” he continues. “We may begin to build training and other interventions for both autistic and non-autistic persons to overcome some of the hurdles to successful interaction by better understanding how people with autism perceive and understand the world.”

One limitation of the study is that many participants who began the online facial expression test did not complete it; the authors note that this was the case for 30% of the autistic participants. The researchers suspect that people are less likely to finish a repetitive test online at home than in a lab under supervision. Another limitation of online testing is that the researchers could not confirm participants’ clinical diagnoses of autism.