Fast cars. We love them by the millions. The fascination transcends national lines, race, religion, and politics. From the stately Stutz Bearcat and Mercer Raceabout (known as “the Steinway of the automobile world” in the early 1900s) to the sexy Pontiac GTOs and Ford Mustangs of the 1960s and the ultimate luxury creations of the Lamborghini and Ferrari families, we have embraced them for more than a century.

Michael Bay, the director of “Transformers” and a man well-versed in outrageous automobiles, has stated, “Fast cars are my only vice.” Many would concur.

Die-hard racing fans would also enthusiastically endorse the assessment of life in the fast lane made by award-winning race car driver Parnelli Jones: “If you’re in control, you’re not going fast enough.”

Now, robotic vehicles are getting in on the action.

Researchers at the University of California, Berkeley, say they have created the first system that trains small-scale robotic cars to drive at high speeds on their own while adapting to and improving in real-world conditions.

Kyle Stachowicz, a graduate student researching robotics, stated, “Our system, FastRLAP, trains autonomously in the real world without human interventions and without requiring any simulation or expert demonstrations.”

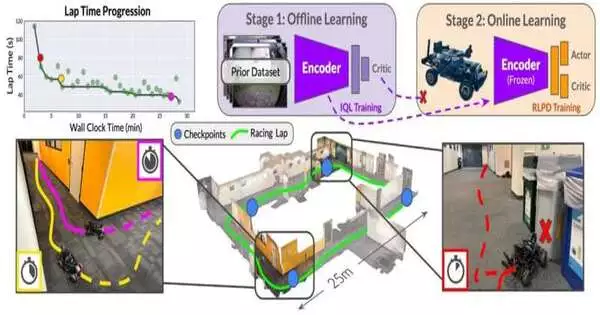

He outlined the components he and his team used in their research, which is now available on the arXiv preprint server. The initialization phase, which generates data about various driving environments, comes first. A model car is manually driven along a variety of courses, and the primary objective is collision avoidance, not speed. The vehicle does not need to be the same one that ultimately learns to drive fast.

A robotic car is put on a course it needs to learn after an extensive dataset covering a wide range of routes has been compiled. After completing a preliminary lap to establish a perimeter, the vehicle sets out on its own. With the dataset in hand, reinforcement learning (RL) algorithms are used to train the vehicle to navigate the course more effectively over time, avoiding obstacles and improving its efficiency by adjusting its speed and direction.
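The two phases described above can be illustrated with a toy sketch. This is not FastRLAP itself, which uses deep RL on a real car. It is plain tabular Q-learning on a made-up six-segment track, and every name and number in it (`TRACK`, `step`, the time costs, the crash penalty) is a hypothetical chosen for illustration. Phase 1 seeds a replay buffer with slow, collision-free laps. Phase 2 lets the agent practice on its own, mixing new experience with the stored data.

```python
import random
from collections import defaultdict

SLOW, FAST = 0, 1
TRACK = [False, False, True, False, True, False]  # True marks a tight corner (toy layout)


def step(seg, action):
    """Toy dynamics: returns (reward, next_segment, done). Reward is negative time."""
    if action == FAST and TRACK[seg]:
        return -10.0, seg, True            # crash: large time penalty, attempt ends
    reward = -1.0 if action == FAST else -2.0
    nxt = seg + 1
    return reward, nxt, nxt == len(TRACK)  # done once the lap is complete


def update(q, transition, alpha=0.5):
    """One undiscounted tabular Q-learning update."""
    seg, action, reward, nxt, done = transition
    target = reward if done else reward + max(q[(nxt, SLOW)], q[(nxt, FAST)])
    q[(seg, action)] += alpha * (target - q[(seg, action)])


def greedy(q, seg):
    """Pick the action with the highest current value estimate."""
    return max((SLOW, FAST), key=lambda a: q[(seg, a)])


def train(episodes=500, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = defaultdict(float)
    # Phase 1: prior data from slow, collision-free manual driving.
    buffer = [(s, SLOW, *step(s, SLOW)) for s in range(len(TRACK))]
    for _ in range(20):
        for t in buffer:
            update(q, t)
    # Phase 2: autonomous practice on the course, with replay from the buffer.
    for _ in range(episodes):
        seg, done = 0, False
        while not done:
            explore = rng.random() < epsilon
            action = rng.choice((SLOW, FAST)) if explore else greedy(q, seg)
            reward, nxt, done = step(seg, action)
            buffer.append((seg, action, reward, nxt, done))
            update(q, buffer[-1])            # learn from the newest experience
            update(q, rng.choice(buffer))    # and from a replayed transition
            seg = nxt
    return [greedy(q, s) for s in range(len(TRACK))]


if __name__ == "__main__":
    print(train())  # learned speed choice per segment (0 = slow, 1 = fast)
```

In this toy setting the learned policy goes fast on the straights and slows down for the corners, a crude analogue of the speed adjustments described in the article.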

The researchers said they were surprised to find that the robotic cars could learn to speed through racing courses in less than 20 minutes.

According to Stachowicz, the results “exhibit emergent aggressive driving skills, such as timing braking and acceleration around turns and avoiding areas that impede the robot’s motion.” The skill displayed by the robotic car “approaches the performance of a human driver using a similar first-person interface over the course of training.”

The concept of the “racing line” is one example of a skill that the vehicle acquires.

The robotic car finds “a smooth path through the lap, maximizing its speed through tight corners,” Stachowicz said. “The robot learns to carry its speed into the apex, then brakes sharply to turn and accelerates out of the corner, to minimize the driving duration.”

In another example, the vehicle learns to oversteer slightly when turning on a low-friction surface, “drifting into the corner to achieve fast rotation without braking during the turn.”

According to Stachowicz, the system will need to address safety concerns in the future. Currently, collision avoidance is rewarded only because it prevents task failure. The system does not take explicit safety measures, such as proceeding cautiously in unfamiliar environments.
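To make that distinction concrete, here is a minimal sketch of the two kinds of reward. Neither function is taken from the paper. The progress-based reward, the `novelty` score, and the `caution_weight` parameter are all assumptions made for illustration.

```python
from typing import Tuple


def task_only_reward(progress_m: float, collided: bool) -> Tuple[float, bool]:
    """Reward progress along the course; a collision simply ends the attempt.

    Hypothetical: collisions matter only because they cut off future reward.
    """
    if collided:
        return 0.0, True
    return progress_m, False


def cautious_reward(progress_m: float, collided: bool, speed_mps: float,
                    novelty: float, caution_weight: float = 0.5) -> Tuple[float, bool]:
    """Same task reward, minus a hypothetical penalty for speed in unfamiliar states.

    `novelty` is an assumed score in [0, 1], where 1 means completely unfamiliar.
    """
    reward, done = task_only_reward(progress_m, collided)
    if not done:
        reward -= caution_weight * novelty * speed_mps
    return reward, done
```

With the first function, the agent has no reason to slow down in a place it has never seen. The second adds one possible incentive to do so, at the cost of some speed.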

He stated, “We hope that addressing these limitations will enable RL-based systems to learn complex and highly performant navigation skills in a wide range of domains, and we believe that our work can provide a stepping stone toward this.”

The researchers “feel the need for speed,” just like “Maverick,” the character played by Tom Cruise in “Top Gun.” So far, they are off to a good start.

More information: Kyle Stachowicz et al, FastRLAP: A System for Learning High-Speed Driving via Deep RL and Autonomous Practicing, arXiv (2023). DOI: 10.48550/arxiv.2304.09831