The image of a hulking mechanical robot that speaks in a monotone and moves with slow, methodical motions is hard to shake. Soft robotics practitioners, by contrast, envision autonomous systems composed of compliant parts that are gentle to the touch, more like human fingers than R2-D2 or Robby the Robot.

Professor Edward Adelson and his Perceptual Science Group at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) are now pursuing this idea. In a recent study, Adelson and Sandra Liu, a mechanical engineering Ph.D. student at CSAIL, built a robotic gripper using novel “GelSight Fin Ray” fingers that, like the human hand, are supple enough to manipulate objects. What sets this work apart from others in the field is that Liu and Adelson equipped their gripper with touch sensors that can match or exceed the sensitivity of human skin.

Their findings were presented last week at the 2022 IEEE 5th International Conference on Soft Robotics.

The fin ray has become a fixture of soft robotics thanks to a 1997 discovery by the German biologist Leif Kniese. When he pressed his finger against a fish’s tail, the ray bent toward the applied force, almost embracing his finger rather than tilting away. The design has since caught on, but it lacks tactile sensitivity. “It’s versatile because it can passively adapt to different shapes and therefore grasp a variety of objects,” Liu says. “But in order to go beyond what others in the field had already done, we set out to incorporate a rich tactile sensor into our gripper.”

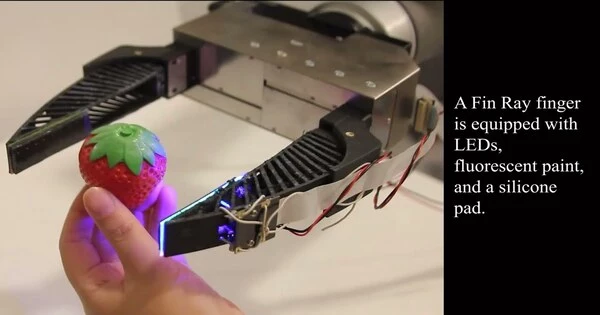

The gripper consists of two flexible fin ray fingers that conform to the contour of whatever object they touch. The fingers themselves are assembled from flexible plastic parts made on a 3D printer, which is standard practice in the field. However, whereas the fingers typically used in soft robotic grippers have supportive cross-struts running the length of their interiors, Liu and Adelson hollowed out the inside to make room for their camera and other sensing components.

The camera is mounted on a semirigid backing at one end of the hollowed-out cavity, which is lit by LEDs. It faces a layer of “sensory” pads made of silicone gel (dubbed “GelSight”) that is attached to a thin sheet of acrylic. The acrylic sheet, in turn, is fixed to the plastic finger piece at the opposite end of the inner cavity. When the finger touches an object, it folds smoothly around it, conforming to the object’s contours. By calculating how the silicone and acrylic sheets deform during this contact, the camera and its accompanying algorithms can determine the object’s general shape, its surface roughness, its position in space, and the force applied by (and to) each finger.

Liu and Adelson put their gripper to the test in an experiment in which only one of the two fingers was “sensorized.” Their system successfully handled a mini screwdriver, a plastic strawberry, a tube of acrylic paint, a Ball Mason jar, and a wine glass. While the gripper held the fake strawberry, for example, the internal sensor was able to detect the “seeds” on its surface. The fingers grasped the paint tube without squeezing it so hard that it ruptured and spilled its contents.

The GelSight sensor could even read the lettering on the Mason jar, and it did so cleverly. First, the overall shape of the jar was determined by observing how the acrylic sheet bent when wrapped around it. A computer algorithm then subtracted that pattern from the deformation of the silicone pad, leaving only the subtler deformation caused by the letters.
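
The subtraction step can be pictured with a one-dimensional sketch. It is an illustrative assumption, not the authors’ algorithm: the jar’s overall curvature is approximated by a least-squares quadratic fitted to one row of pad deformation values (standing in for the shape recovered from the acrylic sheet), and that fit is subtracted so only the small raised strokes of the letters remain. The function names, positions, and deformation values are all hypothetical.

```python
"""Hypothetical sketch: strip a smooth curvature trend from a 1D deformation profile."""
from typing import List, Sequence


def solve_linear(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]  # augmented copy of the system
    for col in range(n):
        # Move the row with the largest entry in this column into the pivot position.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the now upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        acc = m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = acc / m[r][r]
    return x


def fit_quadratic(xs: Sequence[float], ys: Sequence[float]) -> List[float]:
    """Least-squares fit of y ~ c0 + c1*x + c2*x**2 via the normal equations; returns [c0, c1, c2]."""
    s = [sum(x ** k for x in xs) for k in range(5)]  # power sums: sum of x^k for k = 0..4
    t = [sum(y * x ** k for x, y in zip(xs, ys)) for k in range(3)]
    normal = [[s[i + j] for j in range(3)] for i in range(3)]
    return solve_linear(normal, t)


def remove_coarse_shape(xs: Sequence[float], deformation: Sequence[float]) -> List[float]:
    """Subtract the fitted smooth (curved-surface) component, leaving the fine relief."""
    c0, c1, c2 = fit_quadratic(xs, deformation)
    return [d - (c0 + c1 * x + c2 * x * x) for x, d in zip(xs, deformation)]


# Hypothetical demo: sample positions across the pad and a synthetic measured profile.
xs = [i / 10 for i in range(-20, 21)]
curvature = [0.5 * x * x for x in xs]                          # coarse bend from the jar's curve
letters = [0.05 if abs(x - 0.5) < 0.15 else 0.0 for x in xs]   # one small raised letter stroke
measured = [c + s for c, s in zip(curvature, letters)]
relief = remove_coarse_shape(xs, measured)
peak = max(range(len(relief)), key=lambda i: relief[i])
print(f"strongest residual at x = {xs[peak]:.1f}")
```

A quadratic is a reasonable stand-in for a small patch of a cylindrical jar; a system working on full camera images would need a two-dimensional model of the coarse shape, and the fit absorbs a little of the letter signal, which is why the residual at the stroke comes out slightly below its true height.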

Glass objects are a challenge for vision-based robots because of the way they refract light. Tactile sensors are immune to such optical ambiguity. When the gripper picked up the wine glass, it could sense the orientation of the stem and make sure the glass was pointing straight up before carefully lowering it. When the base touched the tabletop, the gel pad registered the contact. The glass was placed correctly in seven out of ten trials, and, happily, no glass was harmed during the filming of this experiment.
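
One simple way to picture the tabletop-contact check is frame differencing: compare each camera image of the gel with a reference image captured before anything presses on it, and flag contact once the average pixel change crosses a threshold. This is a generic technique assumed for illustration, not necessarily what the GelSight Fin Ray software does; the flattened-greyscale frame format, the threshold value, and the function names are hypothetical.

```python
"""Hypothetical sketch: flag contact by differencing gel images against a resting frame."""
from typing import Optional, Sequence

# Assumed threshold in grey levels; a real sensor would need its own calibration.
CONTACT_THRESHOLD = 4.0


def mean_abs_difference(frame: Sequence[float], reference: Sequence[float]) -> float:
    """Average per-pixel intensity change between a frame and the no-contact reference."""
    if len(frame) != len(reference) or not frame:
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(a - b) for a, b in zip(frame, reference)) / len(frame)


def detect_contact(frame: Sequence[float], reference: Sequence[float],
                   threshold: float = CONTACT_THRESHOLD) -> bool:
    """True when the gel image departs from its resting appearance by more than threshold."""
    return mean_abs_difference(frame, reference) > threshold


def first_contact_frame(frames: Sequence[Sequence[float]], reference: Sequence[float],
                        threshold: float = CONTACT_THRESHOLD) -> Optional[int]:
    """Index of the first frame that shows contact while lowering, or None if none does."""
    for i, frame in enumerate(frames):
        if detect_contact(frame, reference, threshold):
            return i
    return None


# Hypothetical demo: 16-pixel flattened greyscale frames captured while lowering the glass.
reference = [100.0] * 16                   # gel at rest, nothing touching the base
frames = [
    [100.0] * 16,                          # glass still in the air
    [101.0] * 16,                          # slight lighting flicker (mean change 1.0)
    [100.0] * 12 + [140.0] * 4,            # base presses on the table (mean change 10.0)
]
print(first_contact_frame(frames, reference))  # prints 2
```

Averaging over the whole image keeps a small lighting flicker from triggering a false contact; a more realistic pipeline would likely smooth frames and examine local regions of the gel rather than a single global mean.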

Wenzhen Yuan, an assistant professor in Carnegie Mellon University’s Robotics Institute who was not involved in the research, says, “Sensing with soft robots has been a huge challenge, because it is difficult to set up sensors, which are normally rigid, on soft bodies. This study offers a simple solution to that difficulty. The authors developed a clever design to make their vision-based sensor work for the compliant gripper, leading to very good results when robots grasp objects or interact with their surroundings. The technology has a great deal of potential for use in real-world robotic grippers.”

Liu and Adelson see many potential applications for the GelSight Fin Ray, but they first want to make some improvements. By hollowing out the finger to make room for their sensing system, they introduced a structural instability, a tendency to twist, which they believe better design could counteract. They hope to make GelSight sensors that work with soft robots developed by other research teams. They also plan to build a three-fingered gripper that could be useful for tasks such as picking up pieces of fruit and judging their ripeness.

Tactile sensing in their approach relies on low-cost components such as a camera, gel, and LEDs. Liu believes that with technology like GelSight, “it may be possible to develop sensors that are both practical and inexpensive.” That, at least, is one goal she and her colleagues in the lab are pursuing.