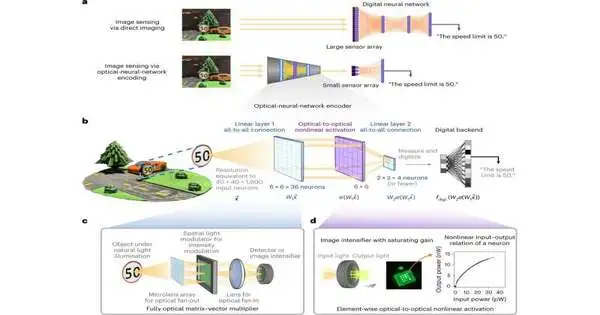

An optical neural network (ONN) that can filter relevant information from a scene before the visual image is detected by a camera has been developed by Cornell researchers. This method may make it possible to construct image sensors that are smaller, faster, and use less energy.

Researchers in the lab of Peter McMahon, assistant professor of applied and engineering physics at Cornell Engineering, have demonstrated that ONN pre-processors can achieve compression ratios of up to 800-to-1 (the equivalent of compressing a 1,600-pixel input to just 4 pixels) while still enabling high accuracy across several representative computer-vision tasks. The research was published in Nature Photonics under the title “Image sensing with multilayer nonlinear optical neural networks.”

Led by Tianyu Wang, Eric and Wendy Schmidt AI in Science Postdoctoral Fellow, and doctoral student Mandar Sohoni, the team tested the ONN image sensor with machine-vision benchmarks, used it to classify cell images in flow cytometers, and further demonstrated its ability to measure and identify objects in 3D scenes.

The difference between digital systems and an optical neural network is that with digital systems, images are first saved and then sent to a digital electronic processor that extracts information. Such electronic processing consumes power and, more importantly, requires far more time for the data to be processed and interpreted.

Wang stated, “Our setup uses an optical neural network, in which the light entering the sensor is first processed through a series of matrix-vector multiplications that compress data to the minimum size required, in this case four pixels.” This is comparable to how human vision works: we notice and remember the key features of what we see, but not all of the unimportant details. By discarding irrelevant or redundant information, an ONN can quickly sort out what matters, yielding a compressed representation of the original data that may have a higher signal-to-noise ratio per camera pixel.
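The series of matrix-vector multiplications Wang describes can be illustrated numerically. The following is a minimal sketch, not the team's implementation: the hidden layer width of 36, the random non-negative weights, and the `saturate` function are all assumptions chosen for illustration, and the names `matvec`, `onn_encode`, and `random_layer` are hypothetical. In the real sensor these operations happen in light before detection, and the weights would be trained for a task.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def saturate(values):
    """Stand-in saturating nonlinearity (assumption, not the device response)."""
    return [v / (1.0 + v) for v in values]

def onn_encode(pixels, layers):
    """Apply each layer as a matrix-vector product, with a nonlinearity between layers."""
    x = list(pixels)
    for index, matrix in enumerate(layers):
        x = matvec(matrix, x)
        if index < len(layers) - 1:  # no nonlinearity after the final layer
            x = saturate(x)
    return x

def random_layer(n_out, n_in, rng):
    """Random non-negative weights, scaled so outputs stay of order one."""
    return [[rng.random() / n_in for _ in range(n_in)] for _ in range(n_out)]

rng = random.Random(0)
pixels = [rng.random() for _ in range(1600)]  # flattened 1,600-pixel input
layer_sizes = [1600, 36, 4]                   # hidden width of 36 is hypothetical
layers = [random_layer(n_out, n_in, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

code = onn_encode(pixels, layers)
print(len(code))  # 4 values would reach the camera instead of 1,600
```

With untrained random weights the four outputs carry no useful meaning; the sketch only shows how the data shrinks from 1,600 values to four through successive multiplications.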

The group also tested reconstructing the original image from the data generated by ONN encoders that had been trained only to classify the image.

“The reconstructed images retained important features, suggesting that the compressed data contained more information than just the classification,” Wang said. “Although not perfect, this was an exciting result, because it suggests that with better training and improved models, the ONN could yield more accurate results.”

Wang and Sohoni believe their work could have practical applications in areas such as early cancer detection research, where cancer cells must be isolated from millions or billions of other cells. In flow cytometry, cells flow rapidly past a detector in a microfluidic channel. An ONN that has been trained to identify the physical characteristics of the cancer cells can rapidly detect and isolate those cells.

“You need to process probably 100 million cells to generate a robust sample of cells that would hold up to statistical analysis,” Sohoni said. “In this situation, the test is very specific, and an optical neural network can be trained to allow the detector to process those cells very quickly, which will generate a larger, better dataset.”

According to Sohoni, ONNs can also be useful when sensing or computing must run on very little power. Image sensing on a satellite in space, for instance, would require a low-power device. In this scenario, the ability of ONNs to compress spatial information can be combined with the ability of event cameras to compress temporal information, because event cameras are triggered only when the input signal changes.
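The event-camera behaviour mentioned here, triggering only when the input signal changes, can be sketched in a few lines. This is a simplified illustration under stated assumptions: the function name `events_between`, the threshold of 0.1, and the sample values are hypothetical, and the four values per frame merely stand in for a compressed sensor output. Real event cameras work per pixel and asynchronously rather than on whole frames.

```python
def events_between(previous, current, threshold=0.1):
    """Return (index, polarity) for each value whose change exceeds the threshold."""
    events = []
    for index, (old, new) in enumerate(zip(previous, current)):
        delta = new - old
        if abs(delta) > threshold:
            events.append((index, 1 if delta > 0 else -1))
    return events

# Hypothetical sequence of four-value readouts over time.
frames = [
    [0.2, 0.5, 0.1, 0.9],
    [0.2, 0.5, 0.1, 0.9],  # unchanged, so nothing is reported
    [0.2, 0.8, 0.1, 0.6],  # two values changed
]

for previous, current in zip(frames, frames[1:]):
    print(events_between(previous, current))
# prints [] and then [(1, 1), (3, -1)]
```

When the scene is static nothing is emitted, which is where the power saving in the time dimension would come from.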

More information: Tianyu Wang et al., Image sensing with multilayer nonlinear optical neural networks, Nature Photonics (2023). DOI: 10.1038/s41566-023-01170-8