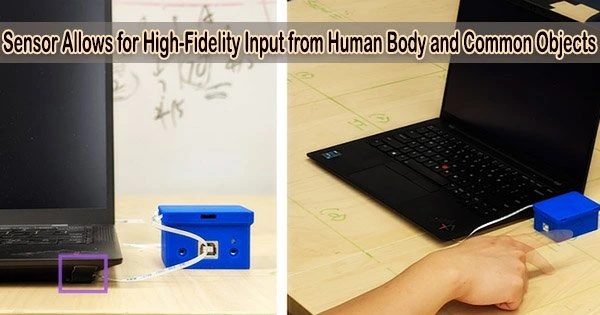

A new sensor technology developed at the University of Michigan could turn almost any surface, including couches, tables, and sleeves, into a high-fidelity input device for computers.

The system uses Voice Pickup Units (VPUs), a new type of bone-conduction microphone that picks up only the acoustic waves traveling along the surface of an object. It works well in noisy settings, on irregularly shaped objects like toys and arms, and on soft materials like upholstery and clothing.

The system, called SAWSense after the surface acoustic waves it relies on, identifies inputs such as taps, scratches, and swipes with 97% accuracy. In one demonstration, the team used an ordinary table in place of a laptop’s trackpad.

“This technology will enable you to treat, for example, the whole surface of your body like an interactive surface,” said Yasha Iravantchi, U-M doctoral candidate in computer science and engineering. “If you put the device on your wrist, you can do gestures on your own skin. We have preliminary findings that demonstrate this is entirely feasible.”

Taps, swipes, and other gestures send acoustic waves along the surfaces of materials. The system then uses machine learning to categorize these waves, turning every touch into a reliable set of inputs. The technology won best paper honors after being presented last week at the 2023 Conference on Human Factors in Computing Systems.

“As more objects continue to incorporate smart or connected technology, designers are faced with a number of challenges when trying to give them intuitive input mechanisms. This results in a lot of clunky incorporation of input methods such as touch screens, as well as mechanical and capacitive buttons,” Iravantchi says. “Touch screens may be too costly to enable gesture inputs across large surfaces like counters and refrigerators, while buttons only allow one kind of input at predefined locations.”

Earlier attempts to get around these limitations relied on microphones and cameras for audio- and gesture-based inputs, but the authors say such approaches have limited practicality in the real world.

“When there’s a lot of background noise, or something comes between the user and the camera, audio and visual gesture inputs don’t work well,” Iravantchi said.

To overcome these limitations, the sensors that power SAWSense are housed in a hermetically sealed chamber that blocks even very loud ambient noise. The only way in is through a mass-spring system, which conducts the surface-acoustic waves into the housing without exposing them to sounds from the surrounding environment.

Paired with the team’s signal processing software, which generates features from the raw data before feeding them into the machine learning model, the technology can record and classify events along an object’s surface.
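The article does not say which features or which model the team uses. As a rough illustration of the general idea only (features first, classifier second), the sketch below assumes band-energy features from a plain DFT and a nearest-centroid classifier. The function names, the synthetic test tones, and the pairing of frequency bands with gesture labels are all hypothetical.

```python
import cmath
import math


def band_energies(signal, n_bands=4):
    """Return the share of spectral energy in each of n_bands equal-width bands.

    Assumption: band energies stand in for whatever features SAWSense computes.
    """
    n = len(signal)
    half = n // 2
    # Naive O(n^2) DFT over bins 1..half (DC is skipped); fine for a short window.
    power = [
        abs(sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))) ** 2
        for k in range(1, half + 1)
    ]
    band_size = half // n_bands
    energies = [sum(power[b * band_size:(b + 1) * band_size]) for b in range(n_bands)]
    total = sum(energies) or 1.0
    return [e / total for e in energies]


def train_centroids(examples):
    """Average the feature vectors of each label: {label: [vectors]} -> {label: mean}."""
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in examples.items()
    }


def classify(features, centroids):
    """Pick the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


def tone(bin_index, n=64):
    """Synthetic stand-in for a recorded surface vibration: one pure sine."""
    return [math.sin(2 * math.pi * bin_index * t / n) for t in range(n)]


# Which band belongs to which gesture is made up purely for illustration.
training = {
    "swipe": [band_energies(tone(3)), band_energies(tone(5))],
    "scratch": [band_energies(tone(20)), band_energies(tone(22))],
}
centroids = train_centroids(training)
predicted = classify(band_energies(tone(21)), centroids)
```

A nearest-centroid rule is used here only because it fits in a few lines; a real system would train on recorded gestures and would likely use a richer model.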

“There are other ways you could detect vibrations or surface-acoustic waves, like piezo-electric sensors or accelerometers,” said Alanson Sample, U-M associate professor of electrical engineering and computer science, “but they can’t capture the broad range of frequencies that we need to tell the difference between a swipe and a scratch, for instance.”

The high fidelity of the VPUs allows SAWSense to identify a wide range of activities on a surface beyond user touch events. For instance, a VPU on a kitchen countertop can tell when appliances such as a blender or microwave are in use, and can detect chopping, stirring, blending, or whisking.

“VPUs do a good job of sensing activities and events happening in a well-defined area,” Iravantchi said. “This allows the functionality that comes with a smart object without the privacy concerns of a standard microphone that senses the whole room, for example.”

Combining multiple VPUs could let SAWSense provide more precise and sensitive inputs, especially those that require a sense of space and distance, such as the keys on a keyboard or the buttons on a remote control.
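The article does not describe how multiple VPUs would be combined. One common way to get a sense of position from two vibration sensors is time difference of arrival, sketched below as an assumption rather than the team's method. The function name, the sensor spacing, and the wave speed are hypothetical placeholders.

```python
def locate_tap(t_first, t_second, sensor_spacing_m, wave_speed_m_s):
    """Estimate where a tap landed on the line between two sensors.

    Sensor 1 sits at position 0 and sensor 2 at sensor_spacing_m. If the tap
    happens at position x, the wave reaches sensor 1 after x / v and sensor 2
    after (spacing - x) / v, so x = (spacing + v * (t_first - t_second)) / 2.
    """
    x = (sensor_spacing_m + wave_speed_m_s * (t_first - t_second)) / 2
    # Clamp to the segment so timing noise cannot place the tap off the surface.
    return min(max(x, 0.0), sensor_spacing_m)


# Placeholder numbers: sensors 0.6 m apart, an assumed wave speed of 1000 m/s,
# and a wave that reaches sensor 1 0.2 ms before it reaches sensor 2.
position_m = locate_tap(
    t_first=0.0002, t_second=0.0004, sensor_spacing_m=0.6, wave_speed_m_s=1000.0
)
```

Wave speed depends on the surface material, so in practice it would have to be measured or calibrated for each surface.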

The researchers are also investigating the application of VPUs for medical sensing, which includes picking up fine noises like the sounds of moving joints and connective tissues. The high-fidelity audio data VPUs provide could enable real-time analytics about a person’s health, Sample says.

The research is partially funded by Meta Platforms Inc.

With the help of U-M Innovation Partnerships, the team has filed for patent protection and is looking for partners to help commercialize the concept.