According to a team of Penn State researchers, a better understanding of how astrocytes function and can be emulated in the physics of hardware devices may result in artificial intelligence (AI) and machine learning that autonomously self-repairs and consumes far less energy than current technologies.

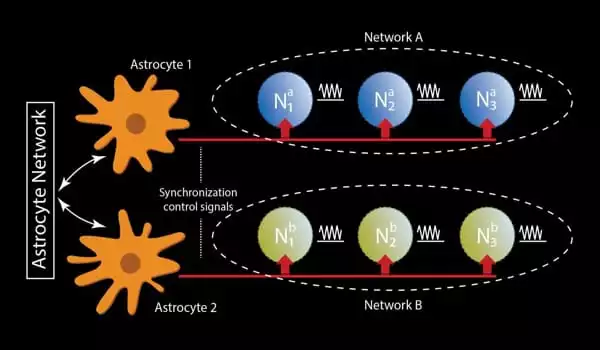

Astrocytes, named for their star shape, are a type of glial cell, the support cells for neurons in the brain. They are essential for brain functions such as memory, learning, self-repair, and synchronization.

“This project arose from recent computational neuroscience observations, as there has been a lot of effort and understanding of how the brain works, and people are trying to revise the model of simplistic neuron-synapse connections,” said Abhronil Sengupta, assistant professor of electrical engineering and computer science. “It turns out that there is a third component in the brain, the astrocytes, which constitute a significant portion of the cells in the brain, but its role in machine learning and neuroscience has been underappreciated.”

At the same time, the AI and machine learning fields are growing explosively. According to the analytics firm Burning Glass Technologies, demand for AI and machine learning skills is expected to grow at a compound annual rate of 71% through 2025. That growth comes with a problem, however: AI and machine learning consume a lot of energy.

“An often-underestimated issue of AI and machine learning is the amount of power consumed by these systems,” Sengupta said. “For example, a few years ago, IBM attempted to simulate a cat’s brain activity and ended up consuming a few megawatts of power. And if we just extended this number to simulate human brain activity on the best supercomputer available today, the power consumption would be even higher than megawatts.”

This power consumption stems from the complex dance of switches, semiconductors, and other mechanical and electrical processes that occur in computer processing, and it rises sharply when the processes are as complex as those required by AI and machine learning. Neuromorphic computing, which mimics brain functions, is one possible solution. Researchers are interested in it because the human brain has evolved to use far less energy for its processes than a computer does, so mimicking those functions could make AI and machine learning more energy-efficient.

Another brain function with neuromorphic computing potential is the brain’s ability to self-repair damaged neurons and synapses.

“Astrocytes play a critical role in self-repair of the brain,” Sengupta explained. “When we try to create these new device structures, we try to create a prototype artificial neuromorphic hardware, which is characterized by a large number of hardware-level flaws. So, based on how astrocyte glial cells cause self-repair in the brain, we may be able to draw insights from computational neuroscience and apply those concepts to possibly cause self-repair of neuromorphic hardware to repair these faults.”

Sengupta’s lab primarily focuses on spintronic devices, a form of electronics that processes information using the spin of electrons. The researchers investigate the magnetic structures of the devices and how to make them neuromorphic by mimicking various neural and synaptic functions of the brain in the devices’ intrinsic physics.

The self-repair work was part of a study published in January in the journal Frontiers in Neuroscience. That research, in turn, led to a second study recently published in the same journal. “When we started working on self-repair aspects in the previous study, we realized that astrocytes also contribute to temporal information binding,” Sengupta explained.

Temporal information binding is the process by which the brain makes sense of relationships between separate events occurring at different times, as well as making sense of these events as a sequence, which is an important function of AI and machine learning.

“It turns out that the magnetic structures we were working with in the previous study can be synchronized together through various coupling mechanisms, and we wanted to explore how these synchronized magnetic devices can mimic astrocyte-induced phase coupling, going beyond prior work on solely neuro-synaptic devices,” Sengupta explained. “We want the devices’ intrinsic physics to mimic the astrocyte phase coupling that you have in the brain.”

To better understand how this could be accomplished, the researchers created neuroscience models, including astrocyte models, to determine which aspects of astrocyte functions would be most relevant to their research. They also created theoretical models of possible spintronic devices.

“We needed to understand the device physics, which required a lot of theoretical modeling of the devices,” Sengupta explained. “After that, we looked into how we could develop an end-to-end, cross-disciplinary modeling framework that included everything from neuroscience models to algorithms to device physics.”

Developing such energy-efficient and fault-tolerant “astromorphic computing” could pave the way for more sophisticated AI and machine learning work to be performed on power-constrained devices like smartphones.

“AI and machine learning are revolutionizing the world around us every day,” Sengupta said. “You can see it from your smartphones recognizing pictures of your friends and family to machine learning’s huge impact on medical diagnosis for various types of diseases. At the same time, research into astrocytes and the types of self-repair and synchronization functions they can enable in neuromorphic computing is still in its early stages. These types of components have a lot of potential applications.”